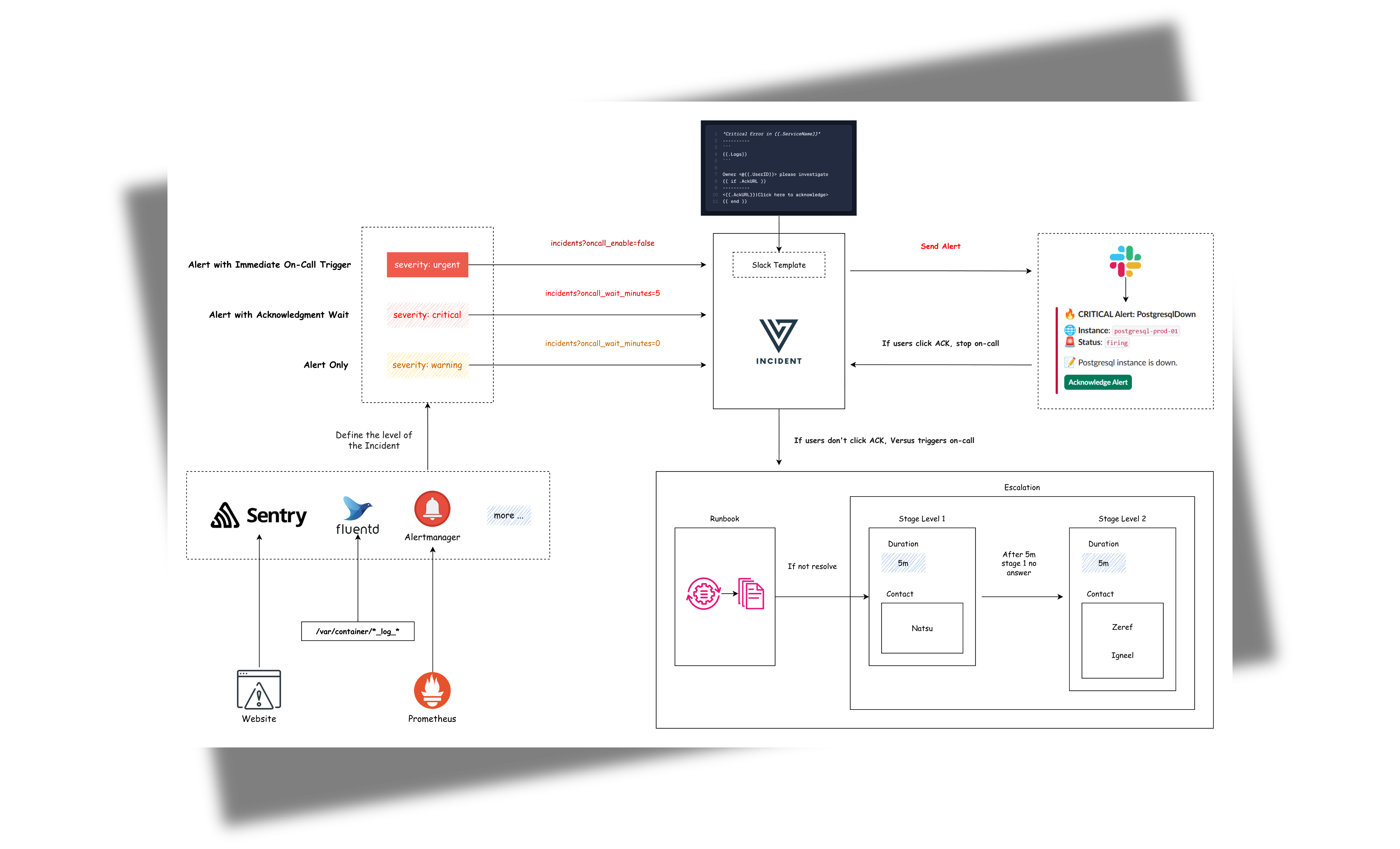

An incident management tool that supports alerting across multiple channels with easy custom messaging and on-call integrations. Compatible with any tool supporting webhook alerts, it's designed for modern DevOps teams to quickly respond to production incidents.

With the built-in AI SRE Agent, Versus goes further — continuously observing your logs, metrics, and traces, learning what normal looks like, and alerting you only when something new and unexpected appears.

Features

- 🚨 Multi-channel Alerts: Send incident notifications to Slack, Microsoft Teams, Telegram, and Email (more channels coming!)

- 📝 Custom Templates: Define your own alert messages using Go templates

- 🔧 Easy Configuration: YAML-based configuration with environment variables support

- 📡 REST API: Simple HTTP interface to receive alerts

- 📡 On-call: On-call integrations with AWS Incident Manager

- 🤖 AI Agent (Beta): An AI SRE agent that reads your logs, metrics and tracing, learns what normal looks like, and only alerts you when something new and unexpected appears.

Roadmap

See ROADMAP.md for the full list of shipped features, work in progress, and planned phases (more log sources, metrics, traces, cross-signal correlation).

Support The Project

GitHub Sponsors · see SPONSORS.md

Contributing

Contributions are welcome. Please read CONTRIBUTING.md for development setup, coding standards, and the PR process, and review the Code of Conduct and security policy before reporting vulnerabilities.

Project governance is documented in GOVERNANCE.md.

License

Distributed under the MIT License. See LICENSE for more information.

Getting Started

Table of Contents

- Prerequisites

- Easy Installation with Docker

- Admin Dashboard

- Storage Backend

- Universal Alert Template Support

- Development Custom Templates

- SNS Usage

- AI Agent

- On-Call

Prerequisites

- Docker 20.10+ (optional)

- Slack workspace (for Slack notifications)

- A

GATEWAY_SECRETvalue of your choosing (required if you want to use the admin dashboard)

Easy Installation with Docker

docker run -p 3000:3000 \

-e GATEWAY_SECRET=change-me \

-e SLACK_ENABLE=true \

-e SLACK_TOKEN=your_token \

-e SLACK_CHANNEL_ID=your_channel \

ghcr.io/versuscontrol/versus-incident

Versus listens on port 3000 by default and exposes:

POST /api/incidents— webhook endpoint for monitoring tools.GET /— the embedded admin dashboard, open http://localhost:3000/ in your browser. For the full UI walkthrough and the build/watch scripts, see Admin Dashboard.

Universal Alert Template Support

Our default template automatically handles alerts from multiple sources, including:

- Alertmanager (Prometheus)

- Grafana Alerts

- Sentry

- CloudWatch SNS

- FluentBit

Example: Send an Alertmanager alert

curl -X POST "http://localhost:3000/api/incidents" \

-H "Content-Type: application/json" \

-d '{

"receiver": "webhook-incident",

"status": "firing",

"alerts": [

{

"status": "firing",

"labels": {

"alertname": "PostgresqlDown",

"instance": "postgresql-prod-01",

"severity": "critical"

},

"annotations": {

"summary": "Postgresql down (instance postgresql-prod-01)",

"description": "Postgresql instance is down."

},

"startsAt": "2023-10-01T12:34:56.789Z",

"endsAt": "2023-10-01T12:44:56.789Z",

"generatorURL": ""

}

],

"groupLabels": {

"alertname": "PostgresqlDown"

},

"commonLabels": {

"alertname": "PostgresqlDown",

"severity": "critical",

"instance": "postgresql-prod-01"

},

"commonAnnotations": {

"summary": "Postgresql down (instance postgresql-prod-01)",

"description": "Postgresql instance is down."

},

"externalURL": ""

}'

Example: Send a Sentry alert

curl -X POST "http://localhost:3000/api/incidents" \

-H "Content-Type: application/json" \

-d '{

"action": "created",

"data": {

"issue": {

"id": "123456",

"title": "Example Issue",

"culprit": "example_function in example_module",

"shortId": "PROJECT-1",

"project": {

"id": "1",

"name": "Example Project",

"slug": "example-project"

},

"metadata": {

"type": "ExampleError",

"value": "This is an example error"

},

"status": "unresolved",

"level": "error",

"firstSeen": "2023-10-01T12:00:00Z",

"lastSeen": "2023-10-01T12:05:00Z",

"count": 5,

"userCount": 3

}

},

"installation": {

"uuid": "installation-uuid"

},

"actor": {

"type": "user",

"id": "789",

"name": "John Doe"

}

}'

Result:

Development Custom Templates

Docker

Create a configuration file:

mkdir -p ./config && touch config.yaml

config.yaml:

name: versus

host: 0.0.0.0

port: 3000

alert:

slack:

enable: true

token: ${SLACK_TOKEN}

channel_id: ${SLACK_CHANNEL_ID}

template_path: "/app/config/slack_message.tmpl"

telegram:

enable: false

viber:

enable: false

msteams:

enable: false

Configuration Notes

Ensure template_path in config.yaml matches container path:

alert:

slack:

template_path: "/app/config/slack_message.tmpl" # For containerized env

Slack Template

Create your Slack message template, for example config/slack_message.tmpl:

🔥 *Critical Error in {{.ServiceName}}*

❌ Error Details:

```{{.Logs}}```

Owner <@{{.UserID}}> please investigate

Run with volume mount:

docker run -d \

-p 3000:3000 \

-v $(pwd)/config:/app/config \

-e SLACK_ENABLE=true \

-e SLACK_TOKEN=your_slack_token \

-e SLACK_CHANNEL_ID=your_channel_id \

--name versus \

ghcr.io/versuscontrol/versus-incident

To test, simply send an incident to Versus:

curl -X POST http://localhost:3000/api/incidents \

-H "Content-Type: application/json" \

-d '{

"Logs": "[ERROR] This is an error log from User Service that we can obtain using Fluent Bit.",

"ServiceName": "order-service",

"UserID": "SLACK_USER_ID"

}'

Response:

{

"status":"Incident created"

}

Result:

Understanding Custom Templates with Monitoring Webhooks

When integrating Versus with any monitoring tool that supports webhooks, you need to understand the JSON payload structure that the tool sends to create an effective template. Here's a step-by-step guide:

- Enable Debug Mode: First, enable debug_body in your config to see the exact payload structure:

alert:

debug_body: true # This will print the incoming payload to the console

-

Capture Sample Payload: Send a test alert to Versus, then review the JSON structure within the logs of your Versus instance.

-

Create Custom Template: Use the JSON structure to build a template that extracts the relevant information.

FluentBit Integration Example

Here's a sample FluentBit configuration to send logs to Versus:

[OUTPUT]

Name http

Match kube.production.user-service.*

Host versus-host

Port 3000

URI /api/incidents

Format json

Header Content-Type application/json

Retry_Limit 3

Sample FluentBit JSON Payload:

{

"date": 1746354647.987654321,

"log": "ERROR: Exception occurred while handling request ID: req-55ef8801\nTraceback (most recent call last):\n File \"/app/server.py\", line 215, in handle_request\n user_id = session['user_id']\nKeyError: 'user_id'\n",

"stream": "stderr",

"time": "2025-05-04T17:30:47.987654321Z",

"kubernetes": {

"pod_name": "user-service-6cc8d5f7b5-wxyz9",

"namespace_name": "production",

"pod_id": "f0e9d8c7-b6a5-f4e3-d2c1-b0a9f8e7d6c5",

"labels": {

"app": "user-service",

"tier": "backend",

"environment": "production"

},

"annotations": {

"kubernetes.io/psp": "eks.restricted",

"monitoring.alpha.example.com/scrape": "true"

},

"host": "ip-10-1-2-4.ap-southeast-1.compute.internal",

"container_name": "auth-logic-container",

"docker_id": "f5e4d3c2b1a0f5e4d3c2b1a0f5e4d3c2b1a0f5e4d3c2b1a0f5e4d3c2b1a0f5e4",

"container_hash": "my-docker-hub/user-service@sha256:abcdef1234567890abcdef1234567890abcdef1234567890abcdef1234567890",

"container_image": "my-docker-hub/user-service:v2.1.0"

}

}

FluentBit Slack Template (config/slack_message.tmpl):

🚨 *Error in {{.kubernetes.labels.app}}* 🚨

*Environment:* {{.kubernetes.labels.environment}}

*Pod:* {{.kubernetes.pod_name}}

*Container:* {{.kubernetes.container_name}}

*Error Details:*

```{{.log}}```

*Time:* {{.time}}

*Host:* {{.kubernetes.host}}

<@SLACK_ONCALL_USER_ID> Please investigate!

Other Templates

Telegram Template

For Telegram, you can use HTML formatting. Create your Telegram message template, for example config/telegram_message.tmpl:

🚨 <b>Critical Error Detected!</b> 🚨

📌 <b>Service:</b> {{.ServiceName}}

⚠️ <b>Error Details:</b>

{{.Logs}}

This template will be parsed with HTML tags when sending the alert to Telegram.

Email Template

Create your email message template, for example config/email_message.tmpl:

Subject: Critical Error Alert - {{.ServiceName}}

Critical Error Detected in {{.ServiceName}}

----------------------------------------

Error Details:

{{.Logs}}

Please investigate this issue immediately.

Best regards,

Versus Incident Management System

This template supports both plain text and HTML formatting for email notifications.

Microsoft Teams Template

Create your Teams message template, for example config/msteams_message.tmpl:

**Critical Error in {{.ServiceName}}**

**Error Details:**

```{{.Logs}}```

Please investigate immediately

SNS Usage

docker run -d \

-p 3000:3000 \

-e SLACK_ENABLE=true \

-e SLACK_TOKEN=your_slack_token \

-e SLACK_CHANNEL_ID=your_channel_id \

-e SNS_ENABLE=true \

-e SNS_TOPIC_ARN=$SNS_TOPIC_ARN \

-e SNS_HTTPS_ENDPOINT_SUBSCRIPTION=https://your-domain.com \

-e AWS_ACCESS_KEY_ID=$AWS_ACCESS_KEY \

-e AWS_SECRET_ACCESS_KEY=$AWS_SECRET_KEY \

--name versus \

ghcr.io/versuscontrol/versus-incident

Send test message using AWS CLI:

aws sns publish \

--topic-arn $SNS_TOPIC_ARN \

--message '{"ServiceName":"test-service","Logs":"[ERROR] Test error","UserID":"U12345"}' \

--region $AWS_REGION

A key real-world application of Amazon SNS involves integrating it with CloudWatch Alarms. This allows CloudWatch to publish messages to an SNS topic when an alarm state changes (e.g., from OK to ALARM), which can then trigger notifications to Slack, Telegram, or Email via Versus Incident with a custom template.

Next steps

- Admin Dashboard — what the UI surfaces and how to rebuild the bundled assets.

- Configuration — every config key, env var, and per-request query parameter.

- Advanced Template Tips - learn how to write template

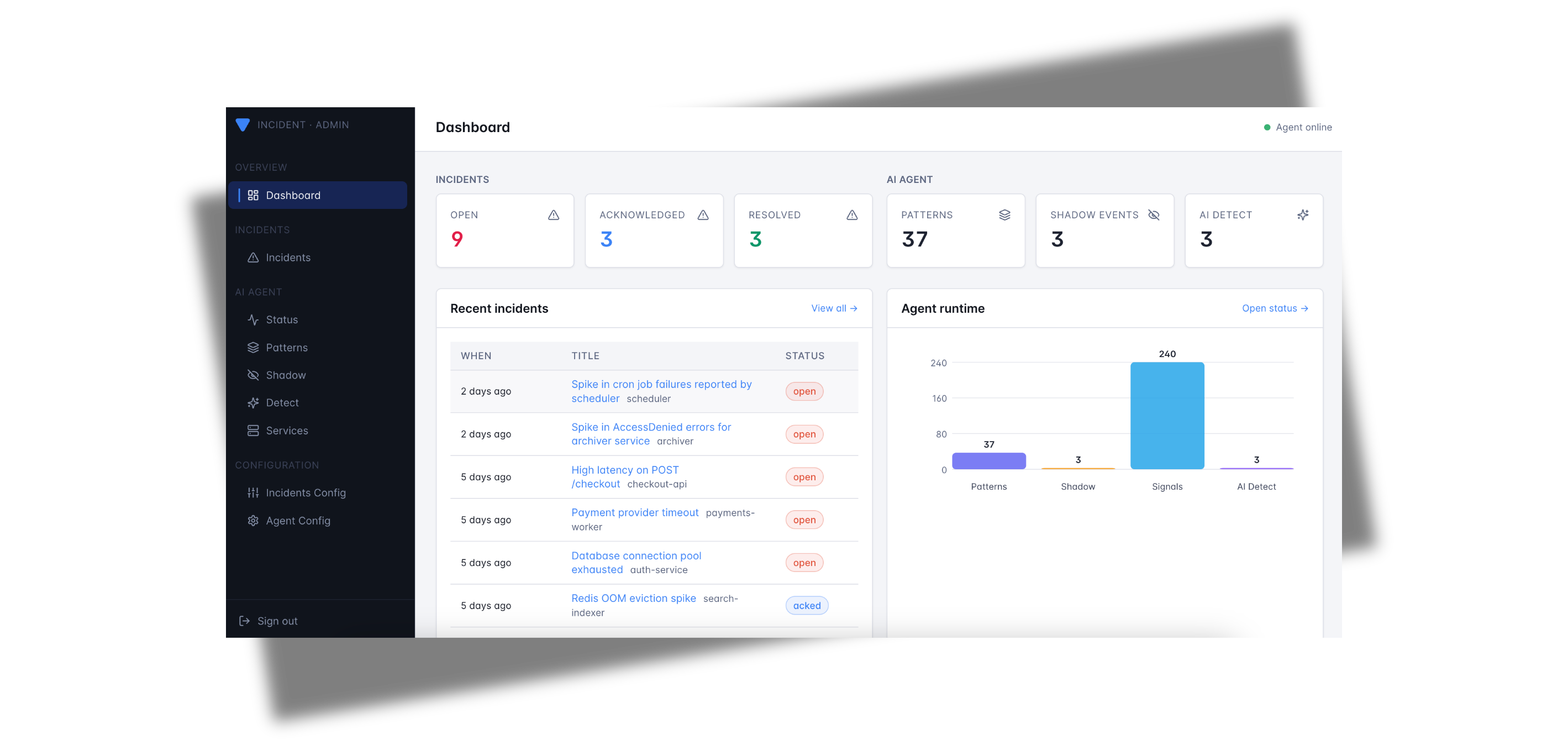

Admin Dashboard

Versus Incident ships with a built-in admin dashboard — a single-page React app embedded directly into the Go binary. There is no separate UI process to run; once the server is up, the dashboard is available at the root path.

Quick start

docker run -p 3000:3000 \

-e GATEWAY_SECRET=change-me \

-e SLACK_ENABLE=true \

-e SLACK_TOKEN=$SLACK_TOKEN \

-e SLACK_CHANNEL_ID=$SLACK_CHANNEL_ID \

ghcr.io/versuscontrol/versus-incident

Then open http://localhost:3000/ in your browser.

Public URL. When running behind a reverse proxy or in Kubernetes, set

public_host(e.g.public_host: https://versus.example.com) inconfig.yamlso the startup banner and acknowledgement links use the externally-reachable address. Withpublic_hostempty, Versus falls back tohttp://<host>:<port>.

GATEWAY_SECRETis required for the dashboard to function. All admin endpoints (/api/admin/*and/api/agent/*) are gated by theX-Gateway-SecretHTTP header. The dashboard prompts you for this value the first time you load it and stores it in your browser'slocalStorage. With no secret configured the admin endpoints are not registered at all.

What you can do

The dashboard surfaces every persisted incident plus, when the AI agent is enabled, the full agent-runtime state. It is meant for day-to-day operations: triaging fresh alerts, acknowledging on-call pages, and curating the agent's pattern catalog.

Pages

| Page | Path | What it shows |

|---|---|---|

| Dashboard | /dashboard | At-a-glance metrics + Agent runtime bar chart, recent incidents, top patterns, recent shadow events. |

| Incidents | /incidents | Full incident history (newest first) with filters for open / acked / resolved and a free-text search. |

| Incident detail | /incidents/:id | Single incident: title, service, channels notified, on-call status, notify outcome, raw payload. |

| Agent status | /status | Worker mode, source count, catalog size, dirty flag. |

| Patterns | /patterns | Every pattern the miner has learned (count, verdict, service, rule, last seen). |

| Pattern detail | /patterns/:id | One pattern: full template, sample message, edit verdict / tags, delete. |

| Shadow | /shadow | NDJSON log of "would-have-alerted" events recorded in shadow mode. |

| Shadow detail | /shadow/:patternId | Drill into one shadow event with the matching catalog entry side-by-side. |

| Services | /services | Every service the agent has discovered, with first-seen timestamps and grace controls. |

Incident lifecycle

Every incident received via POST /api/incidents (or the SNS / SQS

listeners) is persisted to the configured storage backend immediately —

before the alert fan-out — so a downstream channel failure never

loses the record. Each incident carries:

notify_status—pending,sent, orfailed(withnotify_erroron failure). Visible as a coloured pill in the incidents table.acked_at— set when an operator clicks the acknowledge button in Slack/Telegram or hitsGET /api/ack/:incidentID. The dashboard reflects the new state on the next poll.resolved— true when the original payload'sstatus/state/alertStatefield equals"resolved". Resolved alerts skip on-call escalation and theAckURLinjection.

Agent management

When agent.enable: true, the dashboard exposes the agent's full admin

surface without you needing curl:

- Browse the pattern catalog and assign verdicts (

known,spike, custom) or tags so detect-mode emissions stay quiet for the things you've already triaged. - Inspect every shadow event — one click takes you from the recent feed to a detail page that shows the exact log line, the cluster template, and the catalog entry it would have matched.

- Force the worker to flush the catalog or shadow log to disk for

immediate persistence (the worker also flushes periodically — see

agent.catalog.persist_interval). - See services the agent has discovered; end or restart a service's grace period without restarting the binary.

Where the data lives

Everything the dashboard reads is durable. The default backend writes JSON to a directory on disk:

storage:

type: file # file | redis | database (env: STORAGE_TYPE)

file:

data_dir: ./data

max_incidents: 1000 # rolling cap on persisted incidents

Files inside data_dir:

| File | Purpose |

|---|---|

incidents.json | All persisted incidents (most recent max_incidents). |

patterns.json | The agent's pattern catalog and the services map. |

shadow.json | Append-only NDJSON log of shadow events. |

Heads-up. Setting

storage.type: redisordatabaseis currently a config stub — the provider returnsstorage: backend not implemented. Stick withfile(the default) in production until these land.

Running without the UI

If you only need the API surface (for example, in a tightly-scoped CI

fixture), simply leave GATEWAY_SECRET unset. The admin endpoints stay

unregistered and the root path serves a small "UI not built" landing

page that links to /api/incidents and /healthz. The notification

fan-out is unaffected.

Template Syntax Guide

This document explains the template syntax (Go template syntax) used for create a custom alert template in Versus Incident.

Table of Contents

Basic Syntax

Access Data

Access data fields using double curly braces and dot notation, for example, with the data:

{

"Logs": "[ERROR] This is an error log from User Service that we can obtain using Fluent Bit.",

"ServiceName": "order-service",

}

Example template:

*Error in {{ .ServiceName }}*

{{ .Logs }}

Variables

You can declare variables within a template using the {{ $variable := value }} syntax. Once declared, variables can be used throughout the template, for example:

{{ $owner := "Team Alpha" }}

Owner: {{ $owner }}

Output:

Owner: Team Alpha

Pipelines

Pipelines allow you to chain together multiple actions or functions. The result of one action can be passed as input to another, for example:

upper: Converts a string to uppercase.

*{{ .ServiceName | upper }} Failure*

lower: Converts a string to lowercase.

*{{ .ServiceName | lower }} Failure*

title: Converts a string to title case (first letter of each word capitalized).

*{{ .ServiceName | title }} Failure*

default: Provides a default value if the input is empty.

*{{ .ServiceName | default "unknown-service" }} Failure*

slice: Extracts a sub-slice from a slice or string.

{{ .Logs | slice 0 50 }} // First 50 characters

replace: Replaces occurrences of a substring.

{{ .Logs | replace "error" "issue" }}

trimPrefix: Trims a prefix from a string.

{{ .Logs | trimPrefix "prod-" }}

trimSuffix: Trims a suffix from a string.

{{ .Logs | trimSuffix "-service" }}

len: Returns the length

{{ .Logs | len }} // Length of the message

urlquery: Escapes a string for use in a URL query.

uri /search?q={{ .Query | urlquery }}

split: splits a string into array using a separator.

{{ $parts := split "apple,banana,cherry" "," }}

{{/* Iterate over split results */}}

{{ range $parts }}

{{ . }}

{{ end }}

You can chain multiple pipes together:

{{ .Logs | trim | lower | truncate 50 }}

Control Structures

Conditionals

The templates support conditional logic using if, else, and end keywords.

{{ if .IsCritical }}

🚨 CRITICAL ALERT 🚨

{{ else }}

⚠️ Warning Alert ⚠️

{{ end }}

and:

{{ and .Value1 .Value2 .Value3 }}

or:

{{ or .Value1 .Value2 "default" }}

Best Practices

Error Handling:

{{ If .Error }}

{{ .Details }}

{{ else }}

No error details

{{ end }}

Whitespace Control:

{{- if .Production }} // Remove preceding whitespace

PROD ALERT{{ end -}} // Remove trailing whitespace

Template Comments:

{{/* This is a hidden comment */}}

Negates a boolean value:

{{ if not .IsCritical }}

This is not a critical issue.

{{ end }}

Checks if two values are equal:

{{ if eq .Status "critical" }}

🚨 Critical Alert 🚨

{{ end }}

Checks if two values are not equal:

{{ if ne .Env "production" }}

This is not a production environment.

{{ end }}

Returns the length of a string, slice, array, or map:

{{ if gt (len .Errors) 0 }}

There are {{ len .Errors }} errors.

{{ end }}

Checks if a string has a specific prefix:

{{ if .ServiceName | hasPrefix "prod-" }}

Production service!

{{ end }}

Checks if a string has a specific suffix:

{{ if .ServiceName | hasSuffix "-service" }}

This is a service.

{{ end }}

Checks if a message contains a specific strings:

{{ if contains .Logs "error" }}

The message contains error logs.

{{ else }}

The message does NOT contain error.

{{ end }}

Loops

Iterate over slices/arrays with range:

{{ range .ErrorStack }}

- {{ . }}

{{ end }}

Microsoft Teams Templates

Microsoft Teams templates support Markdown syntax, which is automatically converted to Adaptive Cards when sent to Teams. As of April 2025 (with the retirement of Office 365 Connectors), all Microsoft Teams integrations use Power Automate Workflows.

Supported Markdown Features

Your template can include:

- Headings: Use

#,##, or###for different heading levels - Bold Text: Wrap text with double asterisks (

**bold**) - Code Blocks: Use triple backticks to create code blocks

- Lists: Create unordered lists with

-or*, and ordered lists with numbers - Links: Use

[text](url)to create clickable links

Automatic Summary and Text Fields

Versus Incident now automatically handles two important fields for Microsoft Teams notifications:

- Summary: The system extracts a summary from your template's first heading (or first line if no heading exists) which appears in Teams notifications.

- Text: A plain text version of your message is automatically generated as a fallback for clients that don't support Adaptive Cards.

You don't need to add these fields manually - the system handles this for you to ensure proper display in Microsoft Teams.

Example Template

Here's a complete example for Microsoft Teams:

# Incident Alert: {{.ServiceName}}

### Error Information

**Time**: {{.Timestamp}}

**Severity**: {{.Severity}}

## Error Details

```{{.Logs}}```

## Action Required

1. Check system status

2. Review logs in monitoring dashboard

3. Escalate to on-call if needed

[View Details](https://your-dashboard/incidents/{{.IncidentID}})

This will be converted to an Adaptive Card with proper formatting in Microsoft Teams, with headings, code blocks, formatted lists, and clickable links.

Configuration

Table of Contents

- Sample Configuration File

- Environment Variables

- Dynamic Configuration with Query Parameters

- SNS Listener

- AI Agent

- On-Call

A sample configuration file is located at config/config.yaml:

name: versus

host: 0.0.0.0

port: 3000

public_host: https://your-ack-host.example # Required for on-call ack & dashboard links

# Shared secret required for ALL admin endpoints (`/api/admin/*` and

# `/api/agent/*`) and the embedded admin dashboard. Sent by clients

# (and the dashboard) in the `X-Gateway-Secret` header. When empty,

# admin endpoints are not registered and the agent refuses to start.

gateway_secret: ${GATEWAY_SECRET}

# Storage backend used by BOTH the agent (catalog, shadow log, services)

# and the incident service (history shown in the UI). Only `file` is

# implemented today; `redis` and `database` are config stubs.

storage:

type: file # file | redis | database (env: STORAGE_TYPE)

file:

data_dir: ./data

max_incidents: 1000 # rolling cap on persisted incidents

# Optional global proxy applied per-channel via `use_proxy: true` below

# (Telegram, Viber, Lark). Unset to disable.

proxy:

url: ${PROXY_URL} # HTTP/HTTPS/SOCKS5, e.g. http://proxy.example.com:8080

username: ${PROXY_USERNAME}

password: ${PROXY_PASSWORD}

alert:

debug_body: true # Default value, will be overridden by DEBUG_BODY env var

slack:

enable: false # Default value, will be overridden by SLACK_ENABLE env var

token: ${SLACK_TOKEN} # From environment

channel_id: ${SLACK_CHANNEL_ID} # From environment

template_path: "config/slack_message.tmpl"

message_properties:

button_text: "Acknowledge Alert" # Custom text for the acknowledgment button

button_style: "primary" # Button style: "primary" (default blue), "danger" (red), or empty for default gray

disable_button: false # Set to true to disable the button, if you want to handle acknowledgment differently

telegram:

enable: false # Default value, will be overridden by TELEGRAM_ENABLE env var

bot_token: ${TELEGRAM_BOT_TOKEN} # From environment

chat_id: ${TELEGRAM_CHAT_ID} # From environment

template_path: "config/telegram_message.tmpl"

use_proxy: false # Set to true to use the global proxy block above

viber:

enable: false # Default value, will be overridden by VIBER_ENABLE env var

api_type: ${VIBER_API_TYPE} # From environment - "channel" (default) or "bot"

bot_token: ${VIBER_BOT_TOKEN} # From environment (token for bot or channel)

# Channel API (recommended for incident management)

channel_id: ${VIBER_CHANNEL_ID} # From environment (required for channel API)

# Bot API (for individual user notifications)

user_id: ${VIBER_USER_ID} # From environment (required for bot API)

template_path: "config/viber_message.tmpl"

use_proxy: false

email:

enable: false # Default value, will be overridden by EMAIL_ENABLE env var

smtp_host: ${SMTP_HOST} # From environment

smtp_port: ${SMTP_PORT} # From environment

username: ${SMTP_USERNAME} # From environment

password: ${SMTP_PASSWORD} # From environment

to: ${EMAIL_TO} # From environment

subject: ${EMAIL_SUBJECT} # From environment

template_path: "config/email_message.tmpl"

msteams:

enable: false # Default value, will be overridden by MSTEAMS_ENABLE env var

power_automate_url: ${MSTEAMS_POWER_AUTOMATE_URL} # Power Automate HTTP trigger URL (required)

template_path: "config/msteams_message.tmpl"

other_power_urls: # Optional: Define additional Power Automate URLs for multiple MS Teams channels

qc: ${MSTEAMS_OTHER_POWER_URL_QC} # Power Automate URL for QC team

ops: ${MSTEAMS_OTHER_POWER_URL_OPS} # Power Automate URL for Ops team

dev: ${MSTEAMS_OTHER_POWER_URL_DEV} # Power Automate URL for Dev team

lark:

enable: false # Default value, will be overridden by LARK_ENABLE env var

webhook_url: ${LARK_WEBHOOK_URL} # Lark webhook URL (required)

template_path: "config/lark_message.tmpl"

use_proxy: false

other_webhook_urls: # Optional: Enable overriding the default webhook URL using query parameters, eg /api/incidents?lark_other_webhook_url=dev

dev: ${LARK_OTHER_WEBHOOK_URL_DEV}

prod: ${LARK_OTHER_WEBHOOK_URL_PROD}

queue:

enable: true

debug_body: true

# AWS SNS

sns:

enable: false

https_endpoint_subscription_path: /sns # URI to receive SNS messages, e.g. ${host}:${port}/sns or ${https_endpoint_subscription}/sns

# Options If you want to automatically create an sns subscription

https_endpoint_subscription: ${SNS_HTTPS_ENDPOINT_SUBSCRIPTION} # If the user configures an HTTPS endpoint, then an SNS subscription will be automatically created, e.g. https://your-domain.com

topic_arn: ${SNS_TOPIC_ARN}

# AWS SQS

sqs:

enable: false

queue_url: ${SQS_QUEUE_URL}

# GCP Pub Sub (config stub — not yet implemented)

pubsub:

enable: false

# Azure Service Bus (config stub — not yet implemented)

azbus:

enable: false

oncall:

### Enable overriding using query parameters

# /api/incidents?oncall_enable=false => Set to `true` or `false` to enable or disable on-call for a specific alert

# /api/incidents?oncall_wait_minutes=0 => Set the number of minutes to wait for acknowledgment before triggering on-call. Set to `0` to trigger immediately

initialized_only: true # Initialize on-call feature but don't enable by default; use query param oncall_enable=true to enable for specific requests

enable: false # Use this to enable or disable on-call for all alerts

wait_minutes: 3 # If you set it to 0, it means there's no need to check for an acknowledgment, and the on-call will trigger immediately

provider: aws_incident_manager # Valid values: "aws_incident_manager" or "pagerduty"

aws_incident_manager: # Used when provider is "aws_incident_manager"

response_plan_arn: ${AWS_INCIDENT_MANAGER_RESPONSE_PLAN_ARN}

other_response_plan_arns: # Optional: Enable overriding the default response plan ARN using query parameters, eg /api/incidents?awsim_other_response_plan=prod

prod: ${AWS_INCIDENT_MANAGER_OTHER_RESPONSE_PLAN_ARN_PROD}

dev: ${AWS_INCIDENT_MANAGER_OTHER_RESPONSE_PLAN_ARN_DEV}

staging: ${AWS_INCIDENT_MANAGER_OTHER_RESPONSE_PLAN_ARN_STAGING}

pagerduty: # Used when provider is "pagerduty"

routing_key: ${PAGERDUTY_ROUTING_KEY} # Integration/Routing key for Events API v2 (REQUIRED)

other_routing_keys: # Optional: Enable overriding the default routing key using query parameters, eg /api/incidents?pagerduty_other_routing_key=infra

infra: ${PAGERDUTY_OTHER_ROUTING_KEY_INFRA}

app: ${PAGERDUTY_OTHER_ROUTING_KEY_APP}

db: ${PAGERDUTY_OTHER_ROUTING_KEY_DB}

redis: # Required for on-call functionality and the AI agent

insecure_skip_verify: true # dev only

host: ${REDIS_HOST}

port: ${REDIS_PORT}

password: ${REDIS_PASSWORD}

db: 0

# -----------------------------------------------------------------------------

# AI agent (training | shadow | detect) — opt-in.

# When agent.enable=false (the default) nothing extra runs.

# Source list lives in a separate file (sources_path).

# -----------------------------------------------------------------------------

agent:

enable: false # master switch (env: AGENT_ENABLE)

mode: training # training | shadow | detect (env: AGENT_MODE)

poll_interval: 30s # how often each source is pulled

lookback: 5m # initial backfill window on startup

batch_max: 5000 # safety cap per tick

signal_max_bytes: 65536 # cap on Signal.Raw

# Path to the YAML file listing log sources (resolved relative to this

# config file). Override via env: AGENT_SOURCES_PATH.

sources_path: ./agent_sources.yaml

# Grace period for newly discovered services in shadow/detect mode.

# During grace, signals are observed and clustered but never surfaced

# as would-have-alerted (shadow) or sent to the AI analyzer (detect).

# Set to "0" to disable.

new_service_grace: 30m

# Regexes used to extract a service name from each log message. The

# first capture group of the first matching pattern wins. Empty list

# disables service detection (everything attributed to "_unknown").

service_patterns:

- '(?i)\bservice[._-]?name["\s:=]+"?([A-Za-z0-9._-]+)'

- '(?i)\b(?:service|svc|app|component)\s*=\s*"?([A-Za-z0-9._-]+)'

- '(?i)"(?:service|svc|app|component)"\s*:\s*"([A-Za-z0-9._-]+)"'

- '\[([A-Za-z0-9._-]+)\]'

redaction:

enable: true

redact_ips: false # IPs are usually useful context; opt-in

extra_patterns:

- "(?i)password=\\S+"

- "Authorization:\\s*Bearer\\s+\\S+"

catalog:

persist_interval: 30s

auto_promote_after: 50 # in detect mode, this many sightings = "known"

# Spike detection: a known pattern is re-flagged when its tick-level

# frequency exceeds the EWMA baseline by `spike_multiplier`.

spike_multiplier: 5.0

spike_min_frequency: 5

spike_min_baseline_count: 20

miner:

similarity_threshold: 0.4

tree_depth: 4

max_children: 100

regex:

# Pre-filter: only signals matching at least one rule (named or

# default) are forwarded to the miner. Set to ".*" to train on

# every line, or leave empty to require an explicit named match.

default_pattern: "(?i).*error.*"

rules:

- name: oom-killer

pattern: "Out of memory: Killed process"

- name: panic

pattern: "(?i)panic:"

- name: 5xx-burst

pattern: "HTTP/[0-9.]+\\s+5\\d\\d"

# AI analyzer — used in detect mode to assess unknown/spiking patterns.

ai:

enable: false # master switch (env: AGENT_AI_ENABLE)

base_url: ${AGENT_AI_BASE_URL} # OpenAI-compatible chat/completions endpoint

api_key: ${AGENT_AI_API_KEY}

model: "gpt-4o-mini"

temperature: 0.2

max_tokens: 512

max_calls_per_hour: 60 # 0 = unlimited

cache_ttl: "1h"

The runtime list of agent sources lives in the file referenced by

agent.sources_path (default ./agent_sources.yaml):

sources:

- name: prod-app

type: elasticsearch

enable: true

elasticsearch:

addresses:

- https://es.example.internal:9200

username: ${ES_USERNAME}

password: ${ES_PASSWORD}

index: "logs-app-*"

time_field: "@timestamp"

query: 'log.level:(error OR warn)'

message_field: message

page_size: 500

- name: sample-app

type: file

enable: true

file:

path: ./local/resource/sample-app.log

format: text

from_beginning: true

Environment Variables

The application relies on several environment variables to configure alerting services. Below is an explanation of each variable:

Common

| Variable | Description |

|---|---|

DEBUG_BODY | Set to true to enable print body send to Versus Incident. |

Admin & Gateway

| Variable | Description |

|---|---|

GATEWAY_SECRET | Shared secret required to access the admin dashboard and every /api/admin/* and /api/agent/* endpoint. Sent by clients in the X-Gateway-Secret header. When unset the admin endpoints are not registered at all. |

Storage

| Variable | Description |

|---|---|

STORAGE_TYPE | Storage backend for incidents and agent state. One of file (default and the only implemented backend today), redis, database. |

STORAGE_FILE_DATA_DIR | Directory for the file backend. Default ./data. Files written: incidents.json, patterns.json, shadow.json. |

Slack Configuration

| Variable | Description |

|---|---|

SLACK_ENABLE | Set to true to enable Slack notifications. |

SLACK_TOKEN | The authentication token for your Slack bot. |

SLACK_CHANNEL_ID | The ID of the Slack channel where alerts will be sent. Can be overridden per request using the slack_channel_id query parameter. |

Slack also supports interactive acknowledgment buttons that can be configured using the following properties in the config.yaml file:

alert:

slack:

# ...other slack configuration...

message_properties:

button_text: "Acknowledge Alert" # Custom text for the acknowledgment button

button_style: "primary" # Button style: "primary" (default blue), "danger" (red), or empty for default gray

disable_button: false # Set to true to disable the button, if you want to handle acknowledgment differently

These properties allow you to:

- Customize the text of the acknowledgment button (

button_text) - Change the style of the button (

button_style) - options are "primary" (blue), "danger" (red), or leave empty for default gray - Disable the interactive button entirely (

disable_button) if you want to handle acknowledgment through other means

Telegram Configuration

| Variable | Description |

|---|---|

TELEGRAM_ENABLE | Set to true to enable Telegram notifications. |

TELEGRAM_BOT_TOKEN | The authentication token for your Telegram bot. |

TELEGRAM_CHAT_ID | The chat ID where alerts will be sent. Can be overridden per request using the telegram_chat_id query parameter. |

Viber Configuration

Viber supports two types of API integrations:

- Channel API (default): Send messages to Viber channels for team notifications

- Bot API: Send messages to individual users for personal notifications

When to use Channel API:

- ✅ Broadcasting to team channels

- ✅ Public incident notifications

- ✅ Automated system alerts

- ✅ Better for most incident management scenarios

- ✅ No individual user setup required

When to use Bot API:

- ✅ Personal notifications to specific users

- ✅ Direct messaging for individual alerts

- ⚠️ Limited to individual users only

- ⚠️ Requires users to interact with bot first

- ⚠️ User IDs can be hard to obtain

| Variable | Description |

|---|---|

VIBER_ENABLE | Set to true to enable Viber notifications. |

VIBER_BOT_TOKEN | The authentication token for your Viber bot or channel. |

VIBER_API_TYPE | API type: "channel" (default) for team notifications or "bot" for individual messaging. |

VIBER_CHANNEL_ID | The channel ID where alerts will be posted (required for channel API). Can be overridden per request using the viber_channel_id query parameter. |

VIBER_USER_ID | The user ID where alerts will be sent (required for bot API). Can be overridden per request using the viber_user_id query parameter. |

Email Configuration

| Variable | Description |

|---|---|

EMAIL_ENABLE | Set to true to enable email notifications. |

SMTP_HOST | The SMTP server hostname (e.g., smtp.gmail.com). |

SMTP_PORT | The SMTP server port (e.g., 587 for TLS). |

SMTP_USERNAME | The username/email for SMTP authentication. |

SMTP_PASSWORD | The password or app-specific password for SMTP authentication. |

EMAIL_TO | The recipient email address(es) for incident notifications. Can be multiple addresses separated by commas. Can be overridden per request using the email_to query parameter. |

EMAIL_SUBJECT | The subject line for email notifications. Can be overridden per request using the email_subject query parameter. |

Microsoft Teams Configuration

The Microsoft Teams integration now supports both legacy Office 365 webhooks and modern Power Automate workflows with a single configuration option:

alert:

msteams:

enable: true

power_automate_url: ${MSTEAMS_POWER_AUTOMATE_URL}

template_path: "config/msteams_message.tmpl"

Automatic URL Detection (April 2025 Update)

As of the April 2025 update, Versus Incident automatically detects the type of URL provided in the power_automate_url setting:

-

Legacy Office 365 Webhook URLs: If the URL contains "webhook.office.com" (e.g.,

https://yourcompany.webhook.office.com/...), the system will use the legacy format with a simple "text" field containing your rendered Markdown. -

Power Automate Workflow URLs: For newer Power Automate HTTP trigger URLs, the system converts your Markdown template to an Adaptive Card with rich formatting features.

This automatic detection provides backward compatibility while supporting newer features, eliminating the need for separate configuration options.

| Variable | Description |

|---|---|

MSTEAMS_ENABLE | Set to true to enable Microsoft Teams notifications. |

MSTEAMS_POWER_AUTOMATE_URL | The Power Automate HTTP trigger URL for your Teams channel. Automatically works with both Power Automate workflow URLs and legacy Office 365 webhooks. |

MSTEAMS_OTHER_POWER_URL_QC | (Optional) Power Automate URL for the QC team channel. Can be selected per request using the msteams_other_power_url=qc query parameter. |

MSTEAMS_OTHER_POWER_URL_OPS | (Optional) Power Automate URL for the Ops team channel. Can be selected per request using the msteams_other_power_url=ops query parameter. |

MSTEAMS_OTHER_POWER_URL_DEV | (Optional) Power Automate URL for the Dev team channel. Can be selected per request using the msteams_other_power_url=dev query parameter. |

Lark Configuration

| Variable | Description |

|---|---|

LARK_ENABLE | Set to true to enable Lark notifications. |

LARK_WEBHOOK_URL | The webhook URL for your Lark channel. |

LARK_OTHER_WEBHOOK_URL_DEV | (Optional) Webhook URL for the development team. Can be selected per request using the lark_other_webhook_url=dev query parameter. |

LARK_OTHER_WEBHOOK_URL_PROD | (Optional) Webhook URL for the production team. Can be selected per request using the lark_other_webhook_url=prod query parameter. |

Queue Services Configuration

| Variable | Description |

|---|---|

SNS_ENABLE | Set to true to enable receive Alert Messages from SNS. |

SNS_HTTPS_ENDPOINT_SUBSCRIPTION | This specifies the HTTPS endpoint to which SNS sends messages. When an HTTPS endpoint is configured, an SNS subscription is automatically created. If no endpoint is configured, you must create the SNS subscription manually using the CLI or AWS Console. E.g. https://your-domain.com. |

SNS_TOPIC_ARN | AWS ARN of the SNS topic to subscribe to. |

SQS_ENABLE | Set to true to enable receive Alert Messages from AWS SQS. |

SQS_QUEUE_URL | URL of the AWS SQS queue to receive messages from. |

On-Call Configuration

| Variable | Description |

|---|---|

ONCALL_ENABLE | Set to true to enable on-call functionality for all incidents by default. Can be overridden per request using the oncall_enable query parameter. |

ONCALL_INITIALIZED_ONLY | Set to true to initialize on-call feature but keep it disabled by default. When set to true, on-call is triggered only for requests that explicitly include ?oncall_enable=true in the URL. |

ONCALL_WAIT_MINUTES | Time in minutes to wait for acknowledgment before escalating (default: 3). Can be overridden per request using the oncall_wait_minutes query parameter. |

ONCALL_PROVIDER | Specify the on-call provider to use ("aws_incident_manager" or "pagerduty"). |

AWS_INCIDENT_MANAGER_RESPONSE_PLAN_ARN | The ARN of the AWS Incident Manager response plan to use for on-call escalations. Required if on-call provider is "aws_incident_manager". |

AWS_INCIDENT_MANAGER_OTHER_RESPONSE_PLAN_ARN_PROD | (Optional) AWS Incident Manager response plan ARN for production environment. Can be selected per request using the awsim_other_response_plan=prod query parameter. |

AWS_INCIDENT_MANAGER_OTHER_RESPONSE_PLAN_ARN_DEV | (Optional) AWS Incident Manager response plan ARN for development environment. Can be selected per request using the awsim_other_response_plan=dev query parameter. |

AWS_INCIDENT_MANAGER_OTHER_RESPONSE_PLAN_ARN_STAGING | (Optional) AWS Incident Manager response plan ARN for staging environment. Can be selected per request using the awsim_other_response_plan=staging query parameter. |

PAGERDUTY_ROUTING_KEY | Integration/Routing key for PagerDuty Events API v2. Required if on-call provider is "pagerduty". |

PAGERDUTY_OTHER_ROUTING_KEY_INFRA | (Optional) PagerDuty routing key for feature team. Can be selected per request using the pagerduty_other_routing_key=infra query parameter. |

PAGERDUTY_OTHER_ROUTING_KEY_APP | (Optional) PagerDuty routing key for application team. Can be selected per request using the pagerduty_other_routing_key=app query parameter. |

PAGERDUTY_OTHER_ROUTING_KEY_DB | (Optional) PagerDuty routing key for database team. Can be selected per request using the pagerduty_other_routing_key=db query parameter. |

Enabling On-Call for Specific Incidents with initialized_only

When you have initialized_only: true in your configuration (rather than enable: true), on-call is only triggered for incidents that explicitly request it. This is useful when:

- You want the on-call feature ready but not active for all alerts

- You need to selectively enable on-call only for high-priority services or incidents

- You want to let your monitoring system decide which alerts should trigger on-call

Example configuration:

oncall:

enable: false

initialized_only: true # feature ready but not active by default

wait_minutes: 3

provider: aws_incident_manager

# ... provider configuration ...

With this configuration, on-call is only triggered when requested via query parameter:

# This alert will send notifications but NOT trigger on-call escalation

curl -X POST "http://localhost:3000/api/incidents" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[WARNING] Non-critical database latency increase.",

"ServiceName": "database-monitoring",

"UserID": "U12345"

}'

# This alert WILL trigger on-call escalation because of the query parameter

curl -X POST "http://localhost:3000/api/incidents?oncall_enable=true" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[CRITICAL] Production database is down.",

"ServiceName": "core-database",

"UserID": "U12345"

}'

Understanding On-Call Modes:

| Mode | Configuration | Behavior |

|---|---|---|

| Disabled | enable: falseinitialized_only: false | On-call feature is not initialized. No on-call functionality is available. |

| Always Enabled | enable: true | On-call is active for all incidents by default. Can be disabled per request with ?oncall_enable=false. |

| Opt-In Only | enable: falseinitialized_only: true | On-call feature is initialized but inactive by default. Must be explicitly enabled per request with ?oncall_enable=true. |

Redis Configuration

| Variable | Description |

|---|---|

REDIS_HOST | The hostname or IP address of the Redis server. Required if on-call is enabled. |

REDIS_PORT | The port number of the Redis server. Required if on-call is enabled. |

REDIS_PASSWORD | The password for authenticating with the Redis server. Required if on-call is enabled and Redis requires authentication. |

AI Agent Configuration

| Variable | Description |

|---|---|

AGENT_ENABLE | Set to true to start the AI agent worker. When false (default) no agent goroutines, files, or Redis keys are created. |

AGENT_MODE | Worker mode: training (observe and learn only), shadow (classify and log "would-have-alerted" events), or detect (classify + emit). |

AGENT_SOURCES_PATH | Path to the YAML file listing the agent's log sources. Resolved relative to the main config file. Default ./agent_sources.yaml. |

AGENT_NEW_SERVICE_GRACE | Duration a newly discovered service stays in implicit training before detect-mode AI analysis begins (e.g. 30m). 0 disables the grace window. |

AGENT_SERVICE_PATTERNS | Comma-separated list of regexes used to extract the service name from each log line. Each pattern must contain at least one capture group. Overrides the YAML list when set. |

AGENT_AI_ENABLE | Set to true to call the configured LLM in detect mode. When false, detect mode classifies but never calls the model (dry-run). |

AGENT_AI_BASE_URL | OpenAI-compatible chat/completions endpoint, e.g. https://api.openai.com/v1. |

AGENT_AI_API_KEY | Bearer token sent in the Authorization header when calling the LLM. |

AGENT_AI_MODEL | Model identifier, e.g. gpt-4o-mini. |

The agent also requires the root-level

GATEWAY_SECRET(see Admin & Gateway) and the root-levelredisblock — Redis is used to remember per-source cursors so the agent resumes from where it left off after a restart.

Ensure these environment variables are properly set before running the application.

Dynamic Configuration with Query Parameters

We provide a way to overwrite configuration values using query parameters, allowing you to send alerts to different channels and customize notification behavior on a per-request basis.

| Query Parameter | Description |

|---|---|

slack_channel_id | The ID of the Slack channel where alerts will be sent. Use: /api/incidents?slack_channel_id=<your_value>. |

telegram_chat_id | The chat ID where Telegram alerts will be sent. Use: /api/incidents?telegram_chat_id=<your_chat_id>. |

viber_channel_id | The channel ID where Viber alerts will be posted (for Channel API). Use: /api/incidents?viber_channel_id=<your_channel_id>. |

viber_user_id | The user ID where Viber alerts will be sent (for Bot API). Use: /api/incidents?viber_user_id=<your_user_id>. |

email_to | Overrides the default recipient email address for email notifications. Use: /api/incidents?email_to=<recipient_email>. |

email_subject | Overrides the default subject line for email notifications. Use: /api/incidents?email_subject=<custom_subject>. |

msteams_other_power_url | Overrides the default Microsoft Teams Power Automate flow by specifying an alternative key (e.g., qc, ops, dev). Use: /api/incidents?msteams_other_power_url=qc. |

lark_other_webhook_url | Overrides the default Lark webhook URL by specifying an alternative key (e.g., dev, prod). Use: /api/incidents?lark_other_webhook_url=dev. |

oncall_enable | Set to true or false to enable or disable on-call for a specific alert. Use: /api/incidents?oncall_enable=false. |

oncall_wait_minutes | Set the number of minutes to wait for acknowledgment before triggering on-call. Set to 0 to trigger immediately. Use: /api/incidents?oncall_wait_minutes=0. |

awsim_other_response_plan | Overrides the default AWS Incident Manager response plan ARN by specifying an alternative key (e.g., prod, dev, staging). Use: /api/incidents?awsim_other_response_plan=prod. |

pagerduty_other_routing_key | Overrides the default PagerDuty routing key by specifying an alternative key (e.g., infra, app, db). Use: /api/incidents?pagerduty_other_routing_key=infra. |

Examples for Each Query Parameter

Slack Channel Override

To send an alert to a specific Slack channel (e.g., a dedicated channel for database issues):

curl -X POST "http://localhost:3000/api/incidents?slack_channel_id=C01DB2ISSUES" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[ERROR] Database connection pool exhausted.",

"ServiceName": "database-service",

"UserID": "U12345"

}'

Telegram Chat Override

To send an alert to a different Telegram chat (e.g., for network monitoring):

curl -X POST "http://localhost:3000/api/incidents?telegram_chat_id=-1001234567890" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[ERROR] Network latency exceeding thresholds.",

"ServiceName": "network-monitor",

"UserID": "U12345"

}'

Viber Channel Override

To send an alert to a specific Viber channel (recommended for team notifications):

curl -X POST "http://localhost:3000/api/incidents?viber_channel_id=01234567890A=" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[ERROR] Mobile service experiencing high error rates.",

"ServiceName": "mobile-api",

"UserID": "U12345"

}'

Viber User Override

To send an alert to a specific Viber user (for individual notifications):

curl -X POST "http://localhost:3000/api/incidents?viber_user_id=01234567890A=" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[ERROR] Personal alert for mobile service issue.",

"ServiceName": "mobile-api",

"UserID": "U12345"

}'

Email Recipient Override

To send an email alert to a specific recipient with a custom subject:

curl -X POST "http://localhost:3000/api/incidents?email_to=network-team@yourdomain.com&email_subject=Urgent%20Network%20Issue" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[ERROR] Load balancer failing health checks.",

"ServiceName": "load-balancer",

"UserID": "U12345"

}'

Microsoft Teams Channel Override

You can configure multiple Microsoft Teams channels using the other_power_urls setting:

alert:

msteams:

enable: true

power_automate_url: ${MSTEAMS_POWER_AUTOMATE_URL}

template_path: "config/msteams_message.tmpl"

other_power_urls:

qc: ${MSTEAMS_OTHER_POWER_URL_QC}

ops: ${MSTEAMS_OTHER_POWER_URL_OPS}

dev: ${MSTEAMS_OTHER_POWER_URL_DEV}

Then, to send an alert to the QC team's Microsoft Teams channel:

curl -X POST "http://localhost:3000/api/incidents?msteams_other_power_url=qc" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[ERROR] Quality check failed for latest deployment.",

"ServiceName": "quality-service",

"UserID": "U12345"

}'

Lark Webhook Override

You can configure multiple Lark webhook URLs using the other_webhook_urls setting:

alert:

lark:

enable: true

webhook_url: ${LARK_WEBHOOK_URL}

template_path: "config/lark_message.tmpl"

other_webhook_urls:

dev: ${LARK_OTHER_WEBHOOK_URL_DEV}

prod: ${LARK_OTHER_WEBHOOK_URL_PROD}

Then, to send an alert to the development team's Lark channel:

curl -X POST "http://localhost:3000/api/incidents?lark_other_webhook_url=dev" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[ERROR] Development server crash detected.",

"ServiceName": "dev-server",

"UserID": "U12345"

}'

On-Call Controls

To disable on-call escalation for a non-critical alert:

curl -X POST "http://localhost:3000/api/incidents?oncall_enable=false" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[WARNING] This is a minor issue that doesn't require on-call response.",

"ServiceName": "monitoring-service",

"UserID": "U12345"

}'

To trigger on-call immediately without the normal wait period for a critical issue:

curl -X POST "http://localhost:3000/api/incidents?oncall_wait_minutes=0" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[CRITICAL] Payment processing system down.",

"ServiceName": "payment-service",

"UserID": "U12345"

}'

AWS Incident Manager Response Plan Override

You can configure multiple AWS Incident Manager response plans using the other_response_plan_arns setting:

oncall:

enable: true

wait_minutes: 3

provider: aws_incident_manager

aws_incident_manager:

response_plan_arn: ${AWS_INCIDENT_MANAGER_RESPONSE_PLAN_ARN} # Default response plan

other_response_plan_arns:

prod: ${AWS_INCIDENT_MANAGER_OTHER_RESPONSE_PLAN_ARN_PROD} # Production environment

dev: ${AWS_INCIDENT_MANAGER_OTHER_RESPONSE_PLAN_ARN_DEV} # Development environment

staging: ${AWS_INCIDENT_MANAGER_OTHER_RESPONSE_PLAN_ARN_STAGING} # Staging environment

Then, to use a specific AWS Incident Manager response plan for a production environment issue:

curl -X POST "http://localhost:3000/api/incidents?awsim_other_response_plan=prod" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[CRITICAL] Production database cluster failure.",

"ServiceName": "prod-database",

"UserID": "U12345"

}'

PagerDuty Routing Key Override

You can configure multiple PagerDuty routing keys using the other_routing_keys setting:

oncall:

enable: true

wait_minutes: 3

provider: pagerduty

pagerduty:

routing_key: ${PAGERDUTY_ROUTING_KEY} # Default routing key

other_routing_keys:

infra: ${PAGERDUTY_OTHER_ROUTING_KEY_INFRA} # Infrastructure team

app: ${PAGERDUTY_OTHER_ROUTING_KEY_APP} # Application team

db: ${PAGERDUTY_OTHER_ROUTING_KEY_DB} # Database team

Then, to use a specific PagerDuty routing key for the infrastructure team:

curl -X POST "http://localhost:3000/api/incidents?pagerduty_other_routing_key=infra" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[ERROR] Server load balancer failure in us-west-2.",

"ServiceName": "infrastructure",

"UserID": "U12345"

}'

Combining Multiple Parameters

You can combine multiple query parameters to customize exactly how an incident is handled:

curl -X POST "http://localhost:3000/api/incidents?slack_channel_id=C01PROD&telegram_chat_id=-987654321&oncall_enable=true&oncall_wait_minutes=1" \

-H "Content-Type: application/json" \

-d '{

"Logs": "[CRITICAL] Multiple service failures detected in production environment.",

"ServiceName": "core-infrastructure",

"UserID": "U12345",

"Severity": "CRITICAL"

}'

This will:

- Send the alert to a specific Slack channel (

C01PROD) - Send the alert to a specific Telegram chat (

-987654321) - Enable on-call escalation with a shortened 1-minute wait time

SNS Listener

Versus can subscribe to an SNS topic and treat each message as an incoming incident. This is useful for CloudWatch Alarms which can publish to SNS on state changes.

docker run -d \

-p 3000:3000 \

-e SLACK_ENABLE=true \

-e SLACK_TOKEN=your_slack_token \

-e SLACK_CHANNEL_ID=your_channel_id \

-e SNS_ENABLE=true \

-e SNS_TOPIC_ARN=$SNS_TOPIC_ARN \

-e SNS_HTTPS_ENDPOINT_SUBSCRIPTION=https://your-domain.com \

-e AWS_ACCESS_KEY_ID=$AWS_ACCESS_KEY \

-e AWS_SECRET_ACCESS_KEY=$AWS_SECRET_KEY \

--name versus \

ghcr.io/versuscontrol/versus-incident

Test with the AWS CLI:

aws sns publish \

--topic-arn $SNS_TOPIC_ARN \

--message '{"ServiceName":"test-service","Logs":"[ERROR] Test error","UserID":"U12345"}' \

--region $AWS_REGION

A common real-world setup: CloudWatch Alarms → SNS topic → Versus → Slack/Telegram/Email with a custom CloudWatch-aware template.

AI Agent

Versus supports an opt-in AI SRE agent that reads your logs, metrics, and traces, learns what normal looks like, and only alerts you when something new and unexpected appears.

Configuration example with agent features:

name: versus

host: 0.0.0.0

port: 3000

# ... existing alert configurations ...

# Shared secret required for ALL admin endpoints (`/api/admin/*` and

# `/api/agent/*`). Sent by clients in the `X-Gateway-Secret` header.

gateway_secret: ${GATEWAY_SECRET}

# Storage backend for the pattern catalog, shadow log, and incident

# history. Only `file` is implemented today; `redis` and `database`

# are config stubs.

storage:

type: file # file | redis | database (env: STORAGE_TYPE)

file:

data_dir: ./data

max_incidents: 1000 # rolling cap on persisted incidents

agent:

enable: false # Use this to enable or disable the agent for all sources

mode: training # Valid values: "training", "shadow", or "detect"

poll_interval: 30s

# Sources are kept in a separate file so they can be managed independently

# (e.g. swap fixtures, per-environment lists). Path is resolved relative to

# this config file. Override via env: AGENT_SOURCES_PATH.

sources_path: ./agent_sources.yaml

catalog:

persist_interval: 30s

auto_promote_after: 100 # In detect mode, this many sightings = "known"

redaction:

enable: true

redact_ips: false

extra_patterns: # Optional: extra regex rules to scrub before clustering

- "(?i)password=\\S+"

- "Authorization:\\s*Bearer\\s+\\S+"

miner:

similarity_threshold: 0.4

tree_depth: 4

max_children: 100

regex:

# Optional: tag any signal whose message matches this pattern

# if none of the named rules below hit. Leave empty to disable.

default_pattern: "(?i)error|exception|fatal|panic"

# Named rules are tried first, in order. The first match wins.

rules:

- name: oom

pattern: "(?i)out of memory|OOMKilled|java\\.lang\\.OutOfMemoryError"

- name: db-timeout

pattern: "(?i)(connection|query) timeout|deadlock detected"

- name: auth-failure

pattern: "(?i)401 unauthorized|invalid credentials|permission denied"

redis: # Required for the agent to persist source cursors across restarts

host: ${REDIS_HOST}

port: ${REDIS_PORT}

password: ${REDIS_PASSWORD}

db: 0

Explanation:

The agent section includes:

enable: Turn the agent on or off (default:false). When disabled, nothing extra runs.mode: How the agent behaves after it has learned your log patterns:training: observation only — the agent learns patterns and saves them, but sends no alerts.shadow: same as training, but also logs a note every time it would have sent an alert. Good for reviewing before going live.detect: the agent actively sends alerts for any pattern it has never seen before.

poll_interval: How often the agent checks your log sources for new entries.catalog: Where the agent stores the list of known patterns and how often to write updates. Storage is selected by the rootstorage:block.

Admin secret. All admin endpoints (

/api/admin/*and/api/agent/*) are protected by the root-levelgateway_secret(envGATEWAY_SECRET). Set it to any value you choose; clients send the same value in theX-Gateway-Secretheader. When no secret is configured the admin endpoints are not registered and the agent refuses to start.

-

redaction: Rules for automatically removing sensitive information (passwords, tokens, emails, etc.) from logs before the agent processes them. -

miner: Controls how aggressively the agent groups similar log lines together. The defaults work well for most setups. -

regex: Acts as a pre-filter for the agent. Only signals whose message matches at least one rule (a named entry underrulesordefault_pattern) are forwarded to the pattern miner and stored in the catalog.- Named

rulesare tried in order; the first match wins and tags the signal with thatname(stored asrule_nameon the pattern). - If no named rule hits,

default_patternis tried. Matches there are tagged withname=default. - To learn from every line, set

default_pattern: ".*". - To filter aggressively, set

default_pattern: ""(empty) and rely on your named rules.

- Named

-

sources_path: Path to a separate YAML file that lists the log sources the agent should read from. Resolved relative to the main config file. Override viaAGENT_SOURCES_PATH.

The sources file (default ./agent_sources.yaml) has a single top-level sources: list. Each entry needs name, type (file or elasticsearch), enable, plus a matching file: or elasticsearch: block:

sources:

- name: prod-app

type: elasticsearch

enable: true

elasticsearch:

addresses:

- https://es.example.internal:9200

username: ${ES_USERNAME}

password: ${ES_PASSWORD}

index: "logs-app-*"

time_field: "@timestamp"

query: 'log.level:(error OR warn)'

message_field: message

page_size: 500

- name: sample-app

type: file

enable: true

file:

path: ./local/resource/sample-app.log

format: text

from_beginning: true

The redis section is required when agent.enable is true. Redis stores the per-source cursor so the agent picks up where it left off after a restart.

For full integration walkthroughs see Enable AI Agent.

On-Call

Versus supports On-Call integrations with AWS Incident Manager and PagerDuty. Configuration example with on-call features:

name: versus

host: 0.0.0.0

port: 3000

public_host: https://your-ack-host.example # Required for on-call ack

# ... existing alert configurations ...

oncall:

### Enable overriding using query parameters

# /api/incidents?oncall_enable=false => Set to `true` or `false` to enable or disable on-call for a specific alert

# /api/incidents?oncall_wait_minutes=0 => Set the number of minutes to wait for acknowledgment before triggering on-call. Set to `0` to trigger immediately

enable: false

wait_minutes: 3 # If you set it to 0, on-call triggers immediately without checking for an acknowledgment

aws_incident_manager:

response_plan_arn: ${AWS_INCIDENT_MANAGER_RESPONSE_PLAN_ARN}

redis: # Required for on-call functionality

insecure_skip_verify: true # dev only

host: ${REDIS_HOST}

port: ${REDIS_PORT}

password: ${REDIS_PASSWORD}

db: 0

The oncall section includes:

enable: A boolean to toggle on-call functionality for all incidents (default:false).initialized_only: Initialize the on-call subsystem but keep it disabled by default. Withtrue, on-call is triggered only for requests that explicitly include?oncall_enable=true.wait_minutes: Time in minutes to wait for an acknowledgment before escalating (default:3). Set to0to trigger immediately.provider: Which on-call provider to use ("aws_incident_manager"or"pagerduty").aws_incident_manager: Configuration for AWS Incident Manager when selected, includingresponse_plan_arnandother_response_plan_arns.pagerduty: Configuration for PagerDuty when selected, includingrouting_keyandother_routing_keys.

The redis section is required when oncall.enable or oncall.initialized_only is true. It stores the open-incident state needed for ack-or-escalate.

For provider-specific walkthroughs see On-Call setup with Versus.

Deploy on Kubernetes

This page covers running Versus Incident as plain Kubernetes manifests. For the packaged distribution see Helm Chart.

TL;DR for production: mount a

PersistentVolumeClaimat/app/dataand setGATEWAY_SECRET. Without those two, the admin dashboard is unavailable and incident history disappears on every pod restart.

Quick deploy

1. Create the secrets

kubectl create secret generic versus-secrets \

--from-literal=gateway_secret=$GATEWAY_SECRET \

--from-literal=slack_token=$SLACK_TOKEN \

--from-literal=slack_channel_id=$SLACK_CHANNEL_ID

2. ConfigMap with config

# versus-config.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: versus-config

data:

config.yaml: |

name: versus

host: 0.0.0.0

port: 3000

public_host: https://versus.example.com # external URL the dashboard uses

gateway_secret: ${GATEWAY_SECRET}

storage:

type: file

file:

data_dir: /app/data # mount a PVC here (see below)

max_incidents: 1000

alert:

slack:

enable: true

token: ${SLACK_TOKEN}

channel_id: ${SLACK_CHANNEL_ID}

kubectl apply -f versus-config.yaml

Using custom templates

If the default formatting doesn't suit your needs you can override any template by mounting your own file. Create a ConfigMap with your custom template(s) and mount them into the container:

# versus-custom-templates.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: versus-custom-templates

data:

slack_message.tmpl: |

*🚨 Incident in {{.ServiceName}}*

----------

*Severity:* {{.Severity}}

*Environment:* {{.Environment}}

```{{.Logs}}```

Owner <@{{.UserID}}> please investigate

telegram_message.tmpl: |

🚨 <b>Incident in {{.ServiceName}}</b>

<b>Severity:</b> {{.Severity}}

<b>Environment:</b> {{.Environment}}

<pre>{{.Logs}}</pre>

Owner please investigate

kubectl apply -f versus-custom-templates.yaml

Then update the Deployment to mount the custom templates and point the config at them:

# In the container spec:

volumeMounts:

- name: versus-config

mountPath: /app/config/config.yaml

subPath: config.yaml

- name: custom-templates

mountPath: /app/custom/slack_message.tmpl

subPath: slack_message.tmpl

- name: custom-templates

mountPath: /app/custom/telegram_message.tmpl

subPath: telegram_message.tmpl

- name: versus-data

mountPath: /app/data

# In the volumes section:

volumes:

- name: versus-config

configMap:

name: versus-config

- name: custom-templates

configMap:

name: versus-custom-templates

- name: versus-data

persistentVolumeClaim:

claimName: versus-data

And reference the mounted paths in config.yaml:

alert:

slack:

enable: true

template_path: "/app/custom/slack_message.tmpl"

telegram:

enable: true

template_path: "/app/custom/telegram_message.tmpl"

Persistent data store

Versus persists three things to disk via the file storage backend:

| File | Purpose |

|---|---|

incidents.json | Every incident received (rolling cap = max_incidents). |

patterns.json | AI-agent pattern catalog + services map. |

shadow.json | Append-only NDJSON log of shadow events. |

If you don't mount a volume, all three are written to the container's ephemeral filesystem and disappear on every pod restart, redeploy, or rescheduling event. The admin dashboard's incident history will look like it resets.

PersistentVolumeClaim

# versus-pvc.yaml

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: versus-data

spec:

accessModes: ["ReadWriteOnce"]

resources:

requests:

storage: 5Gi

# storageClassName: gp3 # uncomment / set to your cluster's class

kubectl apply -f versus-pvc.yaml

Replicas vs. RWO. A

ReadWriteOncevolume binds to a single node. If you needreplicas > 1either (a) switch to aReadWriteManyclass (EFS, Filestore, Azure Files) so every pod writes to the same directory, or (b) keepreplicas: 1and use aRecreatedeployment strategy. Sharing one RWO PVC across multiple pods will cause file corruption.

Deployment

# versus-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: versus-incident

spec:

replicas: 1 # see PVC note above before bumping this

strategy:

type: Recreate

selector:

matchLabels:

app: versus-incident

template:

metadata:

labels:

app: versus-incident

spec:

containers:

- name: versus-incident

image: ghcr.io/versuscontrol/versus-incident

ports:

- containerPort: 3000

livenessProbe:

httpGet:

path: /healthz

port: 3000

readinessProbe:

httpGet:

path: /healthz

port: 3000

env:

- name: GATEWAY_SECRET

valueFrom:

secretKeyRef:

name: versus-secrets

key: gateway_secret

- name: SLACK_TOKEN

valueFrom:

secretKeyRef:

name: versus-secrets

key: slack_token

- name: SLACK_CHANNEL_ID

valueFrom:

secretKeyRef:

name: versus-secrets

key: slack_channel_id

volumeMounts:

- name: versus-config

mountPath: /app/config/config.yaml

subPath: config.yaml

- name: versus-data

mountPath: /app/data

volumes:

- name: versus-config

configMap:

name: versus-config

- name: versus-data

persistentVolumeClaim:

claimName: versus-data

---

apiVersion: v1

kind: Service

metadata:

name: versus-service

spec:

selector:

app: versus-incident

ports:

- protocol: TCP

port: 3000

targetPort: 3000

kubectl apply -f versus-deployment.yaml

Exposing the dashboard

Set public_host in the config to the external URL clients (and the

admin dashboard's banner) should use. Then expose the Service via your

preferred path — Ingress, LoadBalancer, or kubectl port-forward for

local testing:

kubectl port-forward svc/versus-service 3000:3000

# open http://localhost:3000/

Next steps

- Admin Dashboard — what the UI surfaces and how to rebuild the bundled assets.

- Configuration — every config key, env var, and per-request query parameter.

- Helm Chart — packaged install.

Installing Versus Incident with Helm

This guide explains how to deploy Versus Incident using Helm, a package manager for Kubernetes.

Requirements

- Kubernetes 1.19+

- Helm 3.2.0+

- PV provisioner support in the underlying infrastructure (if persistence is required for Redis)

Installing the Chart

You can install the Versus Incident Helm chart using OCI registry:

helm install versus-incident oci://ghcr.io/versuscontrol/charts/versus-incident

Install with Custom Values

# Install with custom configuration from a values file

helm install \

versus-incident \

oci://ghcr.io/versuscontrol/charts/versus-incident \

-f values.yaml

Upgrading an Existing Installation

# Upgrade an existing installation with the latest version

helm upgrade \

versus-incident \

oci://ghcr.io/versuscontrol/charts/versus-incident

# Upgrade with custom values

helm upgrade \

versus-incident \

oci://ghcr.io/versuscontrol/charts/versus-incident \

-f values.yaml

Configuration

Quick Start Example

Here's a simple example of a custom values file:

# values.yaml

replicaCount: 2

alert:

slack:

enable: true

token: "xoxb-your-slack-token"

channelId: "C12345"

messageProperties:

buttonText: "Acknowledge Alert"

buttonStyle: "primary"

telegram:

enable: false

email:

enable: false

msteams:

enable: false

lark:

enable: false

Important Parameters

| Parameter | Description | Default |

|---|---|---|

replicaCount | Number of replicas for the deployment (set to 1 when agent.enable=true or persistence is enabled) | 2 |

config.publicHost | Public URL for acknowledgment links | "" |

gatewaySecret | Shared secret for /api/admin/* and /api/agent/*. Empty value leaves admin routes unregistered. | "" |

storage.type | Storage backend (only file is implemented today) | "file" |

storage.file.dataDir | Directory for incidents, pattern catalog, detect log | "/app/data" |

storage.persistence.enabled | Mount a PVC at storage.file.dataDir | false |

agent.enable | Enable the AI SRE Agent | false |

agent.mode | training, shadow, or detect | "training" |

agent.ai.enable | Forward unknown / spike patterns to the LLM | false |

agent.ai.apiKey | OpenAI API key (stored in the chart Secret) | "" |

alert.slack.enable | Enable Slack notifications | false |

alert.slack.token | Slack bot token | "" |

alert.slack.channelId | Slack channel ID | "" |

alert.telegram.enable | Enable Telegram notifications | false |

alert.email.enable | Enable email notifications | false |

alert.msteams.enable | Enable Microsoft Teams notifications | false |

alert.lark.enable | Enable Lark notifications | false |

oncall.enable | Enable on-call functionality | false |

oncall.provider | On-call provider ("aws_incident_manager" or "pagerduty") | "aws_incident_manager" |

redis.enabled | Enable bundled Redis (required for on-call) | false |

Notification Channel Configuration

Slack

alert:

slack:

enable: true

token: "xoxb-your-slack-token"

channelId: "C12345"

messageProperties:

buttonText: "Acknowledge Alert"

buttonStyle: "primary" # "primary" (blue), "danger" (red), or empty (default gray)

disableButton: false

Telegram

alert:

telegram:

enable: true

botToken: "your-telegram-bot-token"

chatId: "your-telegram-chat-id"

alert:

email:

enable: true

smtpHost: "smtp.example.com"

smtpPort: 587

username: "your-email@example.com"

password: "your-password"

to: "alerts@example.com"

subject: "Incident Alert"

Microsoft Teams

alert:

msteams:

enable: true

powerAutomateUrl: "your-power-automate-flow-url"

otherPowerUrls:

dev: "dev-team-power-automate-url"

ops: "ops-team-power-automate-url"

Lark

alert:

lark:

enable: true

webhookUrl: "your-lark-webhook-url"

otherWebhookUrls:

dev: "dev-team-webhook-url"

prod: "prod-team-webhook-url"

On-Call Configurations

AWS Incident Manager

oncall:

enable: true

waitMinutes: 3

provider: "aws_incident_manager"

awsIncidentManager:

responsePlanArn: "arn:aws:ssm-incidents::111122223333:response-plan/YourPlan"

otherResponsePlanArns:

prod: "arn:aws:ssm-incidents::111122223333:response-plan/ProdPlan"

dev: "arn:aws:ssm-incidents::111122223333:response-plan/DevPlan"

redis:

enabled: true

auth:

enabled: true

password: "your-redis-password"

architecture: standalone

master:

persistence:

enabled: true

size: 8Gi

PagerDuty

oncall:

enable: true

waitMinutes: 5

provider: "pagerduty"

pagerduty:

routingKey: "your-pagerduty-routing-key"

otherRoutingKeys:

infra: "infrastructure-team-routing-key"

app: "application-team-routing-key"

db: "database-team-routing-key"

redis:

enabled: true

auth:

enabled: true

password: "your-redis-password"

architecture: standalone

master:

persistence:

enabled: true

size: 8Gi

Redis Configuration

Redis is required for on-call functionality. The chart can either deploy its own Redis instance or connect to an external one.

External Redis

redis:

enabled: false

externalRedis:

host: "redis.example.com"

port: 6379

password: "your-redis-password"

insecureSkipVerify: false

db: 0

Custom Alert Templates

You can provide custom templates for each notification channel:

templates:

slack: |

*Critical Error in {{.ServiceName}}*

----------

Error Details:

```

{{.Logs}}

```

----------

Owner <@{{.UserID}}> please investigate

telegram: |

🚨 <b>Critical Error Detected!</b> 🚨

📌 <b>Service:</b> {{.ServiceName}}

⚠️ <b>Error Details:</b>

{{.Logs}}

AWS Integrations

Versus Incident can receive alerts from aws sns systems:

AWS SNS

alert:

sns:

enable: true

httpsEndpointSubscriptionPath: "/sns"

Uninstalling the Chart